The move from monolith to microservice architecture has greatly increased the volume of inter-service traffic. Pixie makes debugging this communication between services easy by providing immediate and deep (full-body) visibility into requests flowing through your cluster.

HTTP requests are featured in this tutorial, but Pixie can trace a number of different protocols including DNS, PostgreSQL, and MySQL. See the full list here.

This tutorial will demonstrate how to use Pixie to:

- Inspect full-body HTTP requests.

- See HTTP error rate per service.

- See HTTP error rate per pod.

If you're interested in troubleshooting HTTP latency, check out the Service Performance tutorial.

You will need a Kubernetes cluster with Pixie installed. If you do not have a cluster, you can create a minikube cluster and install Pixie using one of our install guides.

You will need to install the demo microservices application, using Pixie's CLI:

- Install the Pixie CLI.

- Run `px demo deploy px-sock-shop` to install Weaveworks' Sock Shop demo app.

- Run `kubectl get pods -n px-sock-shop` to make sure all pods are ready before proceeding. The demo app can take up to 5 minutes to deploy.

A developer has noticed that the demo application's cart service is reporting errors.

Let's use Pixie to look at HTTP requests with specific types of errors:

- Select `px/http_data_filtered` from the script drop-down menu.

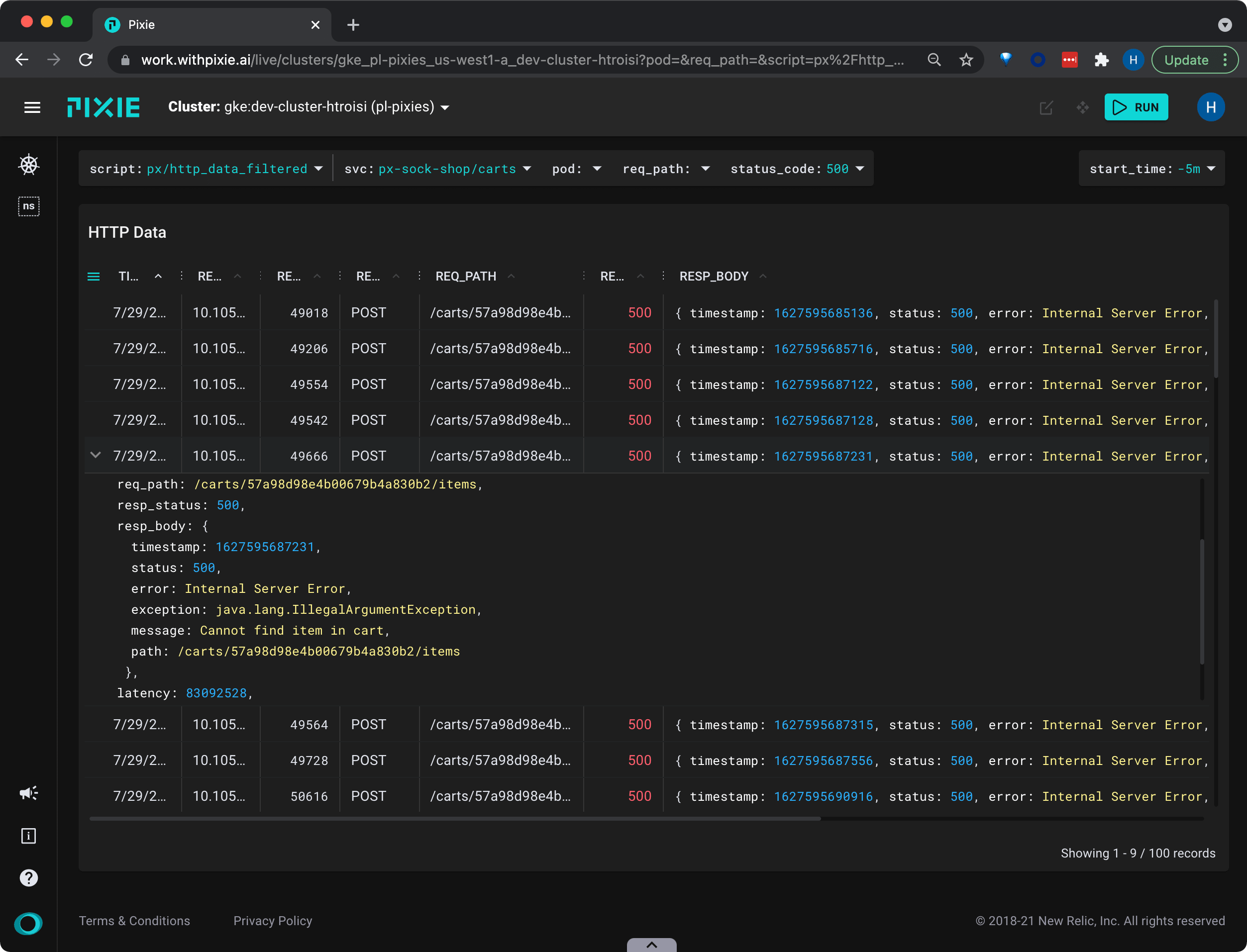

This script shows the most recent HTTP requests in your cluster filtered by service, pod, request path, and response status code.

- Select the drop-down arrow next to the `status_code` argument, type `500`, and press Enter to re-run the script.

This filters the HTTP requests to just those with the `500` status code.

- Select the drop-down arrow next to the `svc` argument, type `px-sock-shop/carts`, and press Enter to re-run the script.

This filters the HTTP requests to just those made to the `carts` service.
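To make the effect of the two filters concrete, here is a minimal plain-Python sketch of the same narrowing applied to a few made-up request records. It is not PxL and not how Pixie implements the script; the field names `svc`, `req_path`, and `resp_status`, the sample data, and the exact-match comparison are all assumptions chosen for illustration.

```python
# Hypothetical request records; field names and values are illustrative only.
requests = [
    {"svc": "px-sock-shop/carts", "req_path": "/carts/1/items", "resp_status": 500},
    {"svc": "px-sock-shop/carts", "req_path": "/carts/1", "resp_status": 200},
    {"svc": "px-sock-shop/orders", "req_path": "/orders", "resp_status": 500},
]


def filter_requests(records, status_code=None, svc=None):
    """Keep records matching every filter that is set; None means 'no filter'."""
    matched = []
    for record in records:
        if status_code is not None and record["resp_status"] != status_code:
            continue
        # Assumption: exact match on the service name.
        if svc is not None and record["svc"] != svc:
            continue
        matched.append(record)
    return matched


# First filter: only responses with status code 500.
errors = filter_requests(requests, status_code=500)

# Second filter: additionally restrict to the carts service.
cart_errors = filter_requests(requests, status_code=500, svc="px-sock-shop/carts")
```

Each filter only ever removes rows, so applying the service filter after the status code filter leaves the subset of errors that belong to `px-sock-shop/carts`.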

For requests with longer message bodies, it's often easier to view the data in JSON form.

Click on a table row to see the row data in JSON format.

Scroll through the JSON data to find the `resp_body` key.

We can see that an HTTP POST request to the `carts` service has returned an error, with the message: `Cannot find item in cart`.
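If you copy a row's JSON out of the UI, the same lookup can be scripted. The snippet below is only a sketch: the row contents are invented for illustration, and `resp_body` is the only key taken from this tutorial.

```python
import json

# Invented example row; only the resp_body key comes from the tutorial.
row_json = (
    '{"resp_status": 500, "req_method": "POST", '
    '"resp_body": "Cannot find item in cart"}'
)


def response_body(raw_row):
    """Parse one row of JSON text and return its resp_body, or None if absent."""
    row = json.loads(raw_row)
    return row.get("resp_body")


message = response_body(row_json)
```

Using `dict.get` rather than indexing keeps the helper from raising when a row has no response body.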

Once we have identified a specific error coming from the carts service, we will want to go up a level to see how often these errors occur at the service level.

- Hover over the HTTP Data table and scroll all the way to the right side.

Pixie's UI makes it easy to quickly navigate between Kubernetes resources. Clicking on any pod, node, service, or namespace name in the UI will open a script showing a high-level overview for that entity.

- From the

SVCcolumn, click on thepx-sock-shop/cartsservice name.

This will open the

px/servicescript with theserviceargument pre-filled with the name of the service you selected.

The

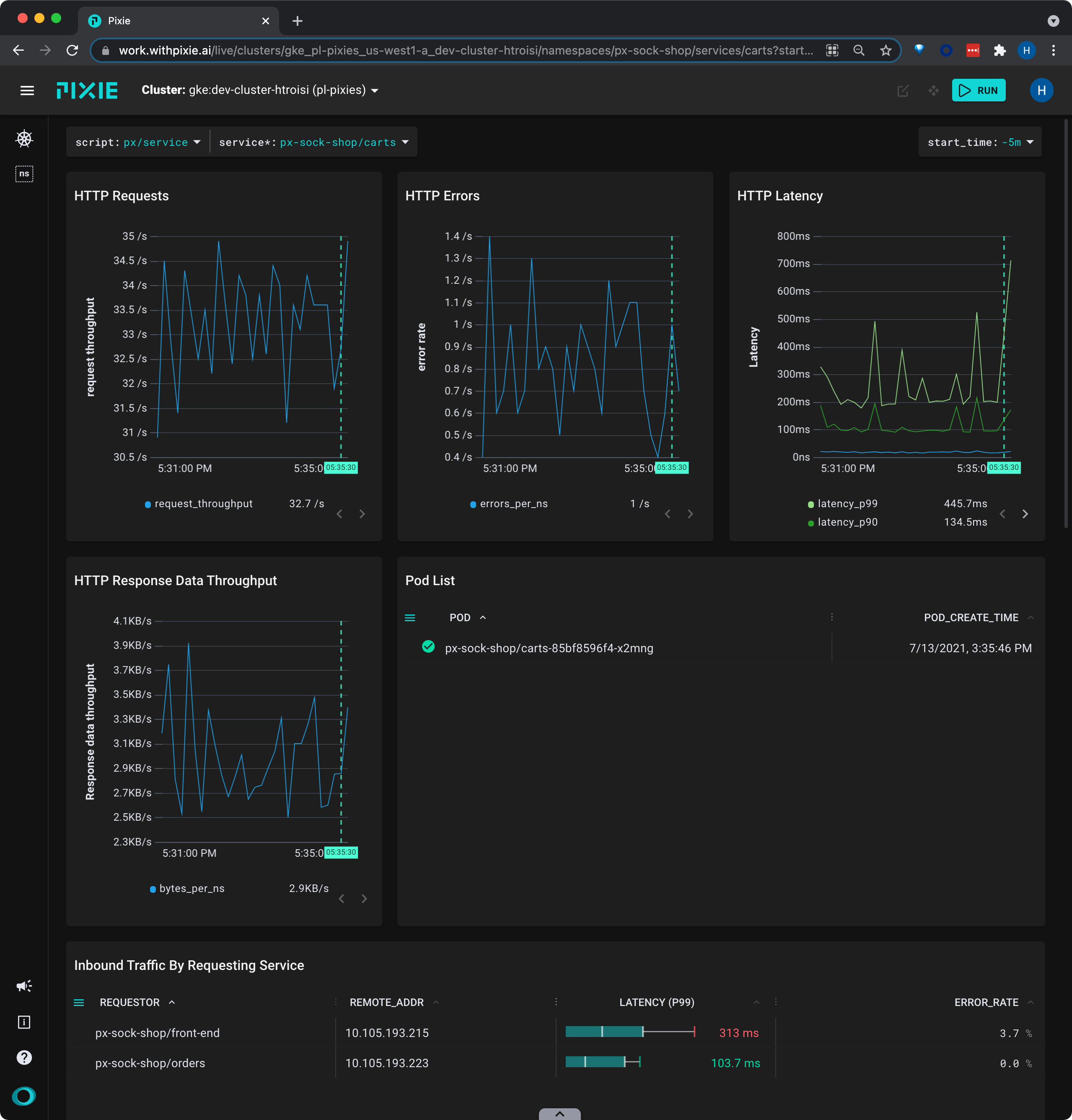

px/servicescript shows error rate over time for all inbound HTTP requests.

We can see that the

cartsservice has had a low but consistent error rate over the selected time window.

- Scroll down to the Inbound Traffic by Requesting Service table.

This table shows the services making requests to the `carts` service.

We can see from the `ERROR_RATE` column that the requests with errors are only coming from the `front-end` service.
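The idea behind an error rate grouped by requesting service can be sketched in plain Python. This is an illustration under stated assumptions, not Pixie's implementation: the records are made up, the `requestor` and `resp_status` field names are hypothetical, and treating any status of 400 or above as an error is an assumption about how the rate is defined.

```python
from collections import defaultdict

# Hypothetical inbound requests to the carts service.
inbound = [
    {"requestor": "px-sock-shop/front-end", "resp_status": 200},
    {"requestor": "px-sock-shop/front-end", "resp_status": 500},
    {"requestor": "px-sock-shop/front-end", "resp_status": 200},
    {"requestor": "px-sock-shop/front-end", "resp_status": 200},
    {"requestor": "px-sock-shop/orders", "resp_status": 200},
    {"requestor": "px-sock-shop/orders", "resp_status": 200},
]


def error_rate_by(records, key):
    """Return {group: fraction of requests with an error status} per group."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for record in records:
        group = record[key]
        totals[group] += 1
        # Assumption: any 4xx or 5xx response counts as an error.
        if record["resp_status"] >= 400:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


rates = error_rate_by(inbound, "requestor")
```

Because the grouping key is a parameter, the same helper would work for a per-pod breakdown if the records carried a pod field.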

If services are backed by multiple pods, it is worth inspecting the individual pods to see if a single pod is the source of the service's errors.

- Scroll up to the Pod List table.

In this case, the carts service is backed by a single pod. If the service had multiple pods, they would be listed here.

- Click on the pod name in the Pod List table.

This will open the `px/pod` script with the `pod` argument pre-filled with the name of the pod you selected.

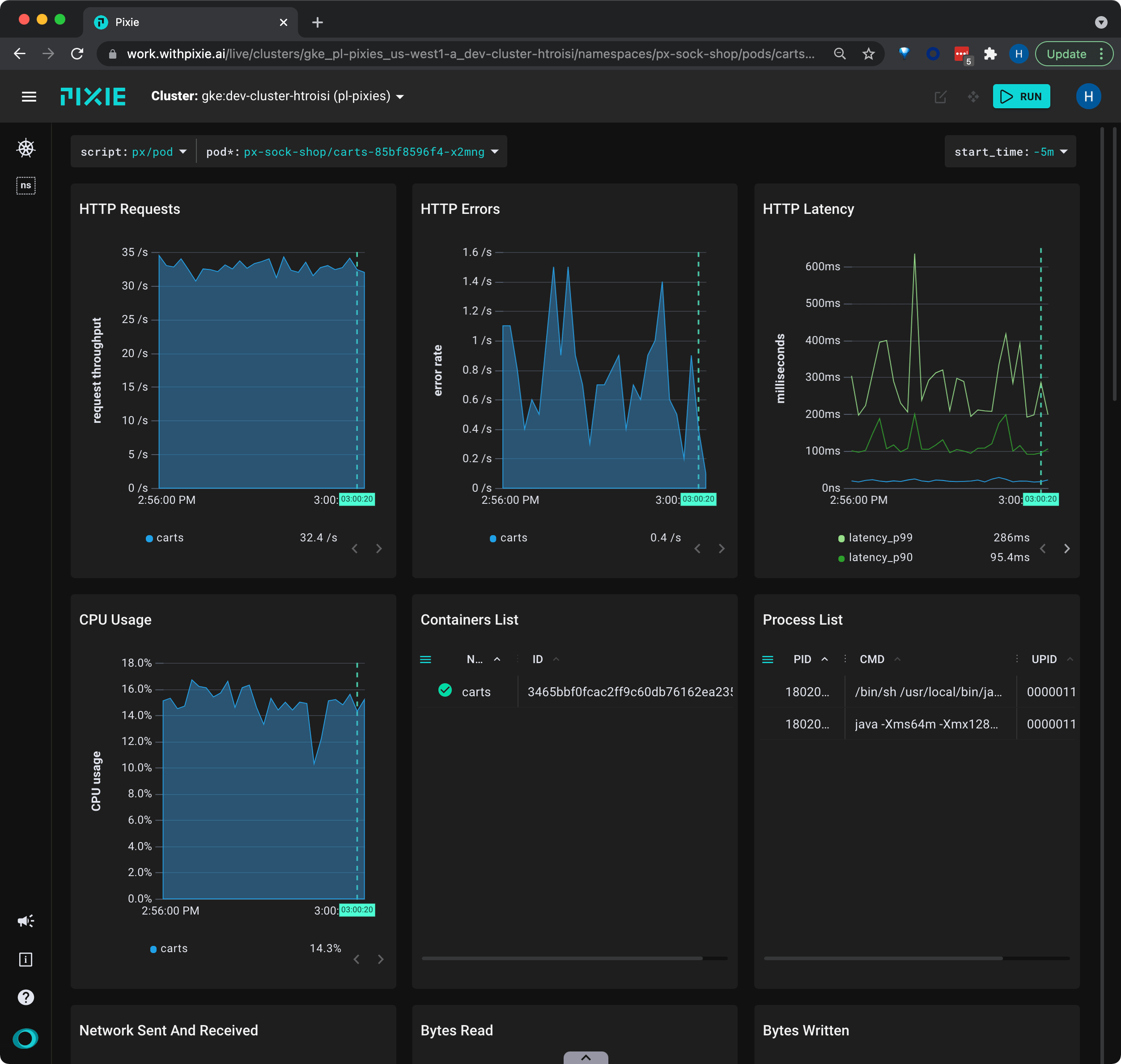

The `px/pod` script shows HTTP error rate alongside high-level resource metrics.

We can see that there is no resource pressure on this pod and that the HTTP request throughput has been constant over the selected time window.

Resolving this bug requires further insight into the application logic. For Go/C/C++ applications, you might want to try Pixie's continuous profiling feature. Pixie also offers dynamic logging for Go applications.

This tutorial demonstrated a few of Pixie's community scripts. To see full body requests for a specific protocol, check out the following scripts:

- `px/http_data` shows the most recent HTTP/2 requests in the cluster.

- `px/dns_data` shows the most recent DNS requests in the cluster.

- `px/mysql_data` shows the most recent MySQL requests in the cluster.

- `px/pgsql_data` shows the most recent Postgres requests in the cluster.

- `px/redis_data` shows the most recent Redis requests in the cluster.

- `px/cql_data` shows the most recent Cassandra requests in the cluster.